Key Takeaways

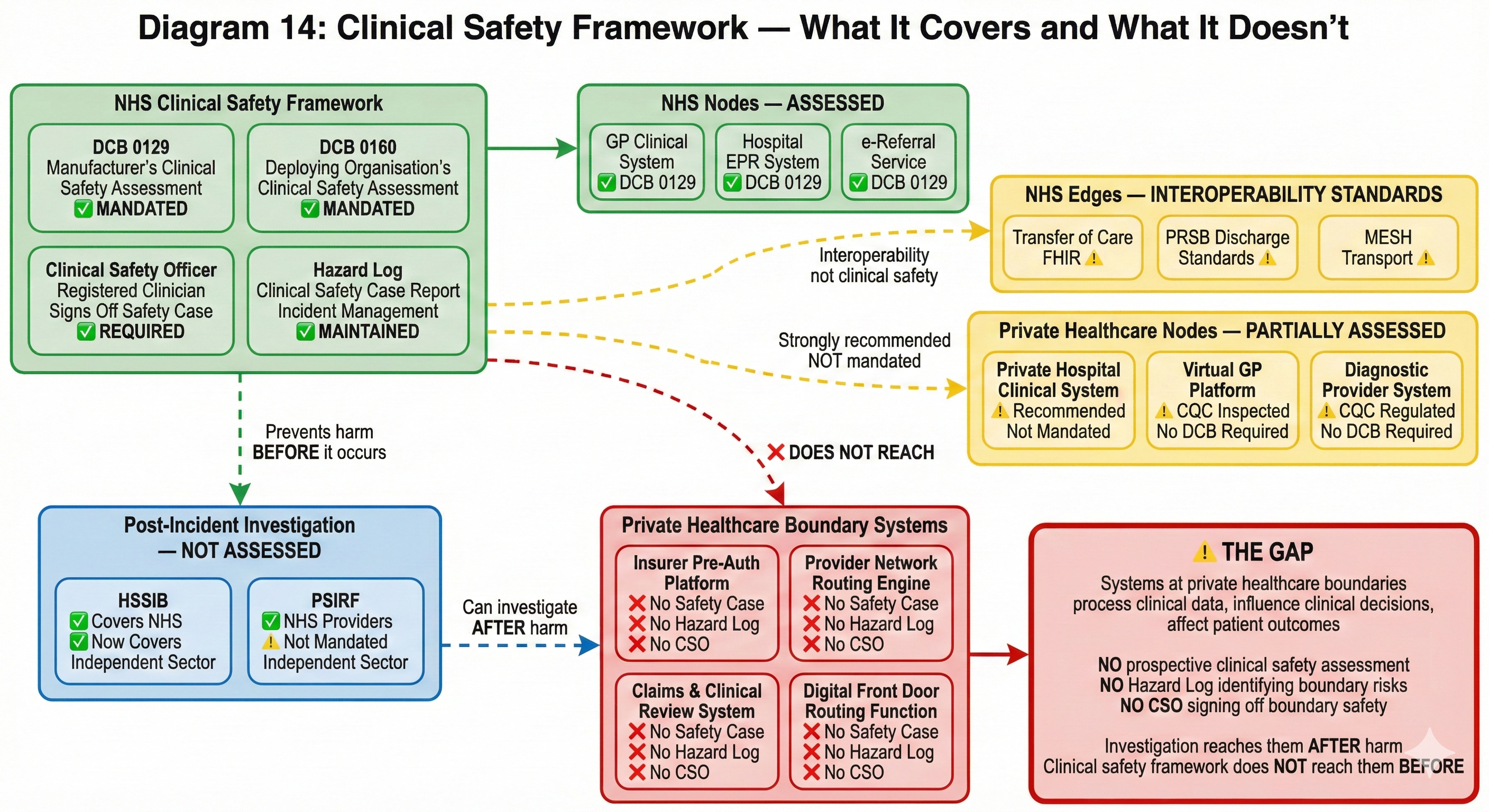

- The UK’s clinical safety framework (DCB 0129/0160) is one of the most rigorous in the world — but it applies to nodes, not edges. Systems that are ‘totally privately funded’ fall outside its mandatory scope.

- Four systems at private healthcare boundaries have no Clinical Safety Case: the pre-authorisation platform, the provider network routing engine, the digital front door triage system, and the claims/clinical review system.

- HSSIB can now investigate patient safety incidents in the independent sector — but private healthcare lacks the prevention layer (prospective clinical safety assessment) that sits before the incident.

- The Paterson case was a boundary crossing failure that nobody assessed. A Hazard Log asking what happens when clinical information fails to cross between settings would have identified the structural risk.

- The DCB standards review is underway. Two critical questions: should scope extend beyond publicly funded services, and should standards assess boundaries as well as nodes?

This is the sixth article in a series examining boundary governance in private healthcare. Previous articles established the structural governance gap at every organisational boundary, mapped the ungoverned constellation of insurer provider networks, examined the clinical-commercial boundary where medical necessity meets policy wording, documented the NHS-private interface where two constitutional domains meet without a governed crossing, and traced the digital front door where clinical consultations become commercial pathways. This article examines why the UK's clinical safety framework — one of the most rigorous in the world for the systems it covers — does not reach the boundaries where patients are most exposed.

The NHS has a clinical safety framework that no other health system has replicated at scale. Two information standards — DCB 0129 for manufacturers and DCB 0160 for deploying organisations — mandate a systematic approach to identifying, assessing, and mitigating clinical risks arising from digital health technologies. Every health IT system used in publicly funded care in England must have a Clinical Safety Case, a Hazard Log, and a named Clinical Safety Officer who signs off that the system is acceptably safe for patient care.

This framework exists because the consequences of getting digital health wrong are clinical, not just technical. A system that misroutes a referral, corrupts a medication record, or fails to transmit a critical alert does not merely produce a software bug. It can produce patient harm. DCB 0129 and 0160 exist to ensure that someone, a registered clinician with specific training in clinical risk management, systematically considers what could go wrong and documents how those risks are controlled.

The framework is mandated under Section 250 of the Health and Social Care Act 2012. It applies to digital products that are publicly funded for use in England and that influence the real-time or near-real-time direct care of patients. NHS England's own step-by-step guidance makes the scope explicit: products that are “totally privately funded (developed and deployed) fall outside of the section 250(6) of Health and Social Care Act 2012” — though NHS England “strongly recommend” that DCB 0129 and 0160 are adopted.

Strongly recommended is not mandated. For the systems that sit at the boundaries this series has examined (the insurer's pre-authorisation platform, the provider network routing engine, the digital front door triage system, and the claims and clinical review system at the clinical-commercial crossing), strongly recommended means, in practice, absent.
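The scope rule quoted above can be expressed as a simple predicate. This is a minimal sketch, not a legal test: the `HealthItSystem` type, its two flags and the `dcb_mandatory` function are hypothetical names, and the flags given to the four boundary systems encode this article's argument (that they influence direct care) rather than any official classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HealthItSystem:
    """Hypothetical record of the two facts the scope test turns on."""
    name: str
    totally_privately_funded: bool  # developed and deployed with no public funding
    influences_direct_care: bool    # real-time or near-real-time direct care


def dcb_mandatory(system: HealthItSystem) -> bool:
    """Simplified reading of the section 250 scope: DCB 0129/0160 are mandatory
    only for publicly funded products that influence direct care. Anything
    else is, at most, 'strongly recommended'."""
    return system.influences_direct_care and not system.totally_privately_funded


# The four boundary systems, flagged according to this article's argument.
boundary_systems = [
    HealthItSystem("pre-authorisation platform", True, True),
    HealthItSystem("provider network routing engine", True, True),
    HealthItSystem("digital front door triage platform", True, True),
    HealthItSystem("claims and clinical review system", True, True),
]

for system in boundary_systems:
    status = "mandatory" if dcb_mandatory(system) else "strongly recommended only"
    print(f"{system.name}: {status}")
```

Every system prints "strongly recommended only". The clinical-function flag is identical for all four; only the funding flag keeps them out of mandatory scope.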

What clinical safety actually requires

Before examining where the framework does not reach, it is worth understanding what it requires where it does.

A manufacturer of a health IT system must, under DCB 0129, establish a Clinical Risk Management System — a documented set of processes for identifying clinical hazards, assessing their severity and likelihood, defining controls, and recording all of this in a Hazard Log. The Hazard Log is not an abstract compliance document. It is a living record that asks, for every function of the system: what could go wrong clinically? How bad could it be? How likely is it? What controls prevent it? Is the residual risk acceptable?
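The questions a Hazard Log asks of each function map naturally onto a record plus a severity-likelihood lookup. The sketch below is illustrative only. The article's own example later uses "Significant" and "Major" as severity terms, but the full label lists, the cell values in `RISK_MATRIX`, the `ACCEPTABLE_MAX` threshold and all class and function names are assumptions for this example, not values taken from DCB 0129.

```python
from dataclasses import dataclass, field
from typing import List, Optional

SEVERITY = ["Minor", "Significant", "Considerable", "Major", "Catastrophic"]
LIKELIHOOD = ["Very low", "Low", "Medium", "High", "Very high"]

# Illustrative lookup: RISK_MATRIX[likelihood index][severity index] -> level 1-5.
# Cell values are assumed for this sketch, not copied from any standard.
RISK_MATRIX = [
    [1, 1, 2, 2, 3],  # Very low
    [1, 2, 2, 3, 4],  # Low
    [2, 2, 3, 3, 4],  # Medium
    [2, 3, 3, 4, 5],  # High
    [3, 4, 4, 5, 5],  # Very high
]
ACCEPTABLE_MAX = 2  # hypothetical: levels 1-2 treated as acceptable


def risk_level(severity: str, likelihood: str) -> int:
    return RISK_MATRIX[LIKELIHOOD.index(likelihood)][SEVERITY.index(severity)]


@dataclass
class HazardLogEntry:
    function: str     # which system function is being assessed
    hazard: str       # what could go wrong clinically?
    severity: str     # how bad could it be?
    likelihood: str   # how likely is it, before controls?
    controls: List[str] = field(default_factory=list)  # what controls prevent it?
    residual_likelihood: Optional[str] = None           # likelihood once controls apply

    def initial_risk(self) -> int:
        return risk_level(self.severity, self.likelihood)

    def residual_risk(self) -> int:
        # In this sketch controls reduce likelihood only; severity is unchanged.
        return risk_level(self.severity, self.residual_likelihood or self.likelihood)

    def acceptable(self) -> bool:
        """Is the residual risk acceptable?"""
        return self.residual_risk() <= ACCEPTABLE_MAX


entry = HazardLogEntry(
    function="send referral",
    hazard="Referral misrouted to the wrong service",
    severity="Major",
    likelihood="Medium",
    controls=["Mandatory service confirmation before sending"],
    residual_likelihood="Very low",
)
print(entry.initial_risk(), entry.residual_risk(), entry.acceptable())  # 3 2 True
```

Recording the controls alongside the before-and-after scores is what lets a reviewer see *why* the residual risk is judged acceptable, rather than only that it was.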

The Clinical Safety Case Report synthesises these assessments into a structured argument that the system is acceptably safe. It is reviewed and signed off by a Clinical Safety Officer — a registered clinician (GMC, NMC, or equivalent professional body) with specific training in clinical risk management. The CSO's role is not ceremonial. They are the named individual accountable for asserting that clinical risks have been systematically identified and managed.

Under DCB 0160, the deploying organisation must conduct its own clinical risk assessment, because the same system deployed in different clinical contexts creates different hazards. A referral management system used in a single NHS trust creates different risks from the same system used across an integrated care system. Context matters. The deploying organisation's CSO must assess the risks specific to their deployment and maintain their own Hazard Log.
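The point that context creates hazards can be shown with a deliberately small model. In this sketch the deploying organisation starts from the manufacturer's Hazard Log and adds hazards specific to its own setting, so one product yields two different logs. The `Hazard` type, the `deployment_hazard_log` helper and every hazard description are hypothetical examples.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Hazard:
    hazard_id: str
    description: str
    raised_under: str  # "DCB 0129" (manufacturer) or "DCB 0160" (deployer)


def deployment_hazard_log(manufacturer_log: List[Hazard],
                          context_hazards: List[Hazard]) -> List[Hazard]:
    """Deployer's log: inherited manufacturer hazards plus local ones.
    Hypothetical helper; a real log would also re-assess inherited hazards."""
    return list(manufacturer_log) + list(context_hazards)


# One referral management product (hypothetical hazards throughout).
manufacturer_log = [Hazard("M1", "Referral sent to wrong service queue", "DCB 0129")]

single_trust = deployment_hazard_log(manufacturer_log, [
    Hazard("T1", "Local service directory out of date", "DCB 0160"),
])
ics_wide = deployment_hazard_log(manufacturer_log, [
    Hazard("S1", "Patient identity mismatch between organisations", "DCB 0160"),
    Hazard("S2", "Referral accepted with no named clinical owner", "DCB 0160"),
])

only_in_ics = {h.hazard_id for h in ics_wide} - {h.hazard_id for h in single_trust}
print(sorted(only_in_ics))  # ['S1', 'S2']
```

Both hazards unique to the system-wide deployment arise where organisations meet, which is the kind of hazard the rest of this article argues is left unassessed.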

This framework has over fifty specific requirements. It covers the full lifecycle of the system — from initial deployment through modification, upgrade, and decommissioning. It requires incident management processes, change control with clinical safety review, and ongoing monitoring. It is, by international standards, rigorous.

And it applies to nodes. Not to edges.

The gap: clinical safety stops at the organisational boundary

DCB 0129 assesses the clinical safety of a health IT system. DCB 0160 assesses the clinical safety of deploying that system within a health organisation. Neither standard asks: what happens when clinical data crosses from one system to another, from one organisation to another, from one governance framework to another?

This is not an oversight in the standards. It reflects the environment in which they were designed. Within the NHS, the assumption has been that interoperability standards — Transfer of Care FHIR specifications, PRSB standards, MESH transport, NHS number as definitive identifier — would govern the crossings between systems. Clinical safety of each node, interoperability standards for the edges. Two complementary frameworks covering the full pathway.

The assumption was always incomplete. Even within the NHS, interoperability standards are inconsistently implemented. The Oskrochi et al. study, published in October 2025 and based on a survey of all NHS organisations, noted that although DCB 0129 and 0160 are legal requirements, no public data had previously existed on how many digital health technologies were actually in use or how many had been properly assured. The study revealed significant compliance gaps even within the publicly funded system, where the standards are mandatory.

But in private healthcare, the assumption collapses entirely. There is no Transfer of Care FHIR specification governing how clinical data moves between private providers. No PRSB standard mandating discharge summary content from private hospitals. No MESH transport connecting private systems. No interoperability framework at all. And DCB 0129 and 0160 — the clinical safety standards that govern the nodes — are not mandated for systems that are entirely privately funded.

The result is a patient pathway where neither the nodes nor the edges have mandatory clinical safety assessment.
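The node-and-edge distinction can be made concrete by modelling a pathway as a small graph and listing every element that has no mandatory clinical safety assessment. The pathway steps, class names and `mandated` flags are hypothetical simplifications: the NHS flags reflect the description above (nodes covered by DCB 0129/0160, edges left to interoperability standards), and the private flags reflect the funding-based scope gap.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Node:
    name: str
    mandated: bool  # mandatory DCB 0129/0160 assessment applies


@dataclass(frozen=True)
class Edge:
    source: str
    target: str
    mandated: bool = False  # neither standard assesses the crossing itself


def unassessed(nodes: List[Node], edges: List[Edge]) -> List[str]:
    """Every node or crossing with no mandatory clinical safety assessment."""
    gaps = [n.name for n in nodes if not n.mandated]
    gaps += [f"{e.source} -> {e.target}" for e in edges if not e.mandated]
    return gaps


def chain(names: List[str], mandated: bool):
    """Build a linear pathway in which each step hands over to the next."""
    nodes = [Node(n, mandated) for n in names]
    edges = [Edge(a, b) for a, b in zip(names, names[1:])]
    return nodes, edges


nhs = chain(["GP system", "referral service", "trust EPR"], mandated=True)
private = chain(["virtual GP platform", "pre-authorisation platform",
                 "network routing engine"], mandated=False)

print(unassessed(*nhs))      # only the two crossings
print(unassessed(*private))  # all three systems and both crossings
```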

Systems at private healthcare boundaries that have no clinical safety case

Consider the digital systems that sit at the boundaries this series has examined. Each makes decisions with clinical consequences. None is required to have a Clinical Safety Case, a Hazard Log, or a Clinical Safety Officer.

The insurer's pre-authorisation platform. When a consultant requests authorisation for a treatment, clinical information crosses from the clinical domain into a commercial platform. The platform's logic determines what information is captured, what criteria are applied, and what decision emerges. Article 3 showed that pre-authorisation is a clinical-commercial crossing: clinical reasoning enters, and a commercial decision with clinical consequences exits. The platform that mediates this crossing is a health IT system in every functional sense. It processes clinical data, influences clinical decisions, and affects patient outcomes. Consider one hazard: the platform's information architecture strips clinical context from a consultant's recommendation, producing an authorisation decision that is commercially rational but clinically unsafe. Under what clinical safety framework has that hazard been assessed? In which Hazard Log is the risk recorded? Which Clinical Safety Officer has signed off that the residual risk is acceptable?

The provider network routing engine. Article 2 showed how insurers route patients through provider networks using algorithmic ranking — Bupa's Platinum tier, Vitality's 500+ data point system, AXA's Fast Track. These routing decisions determine which specialist the patient sees, which facility they attend, and by extension what clinical expertise is available to them. The routing algorithm is a health IT system that influences direct care. If it routes a patient to a specialist whose expertise does not match the patient's clinical complexity, that is a clinical harm caused by a digital system. No DCB 0129 assessment has examined the clinical hazards arising from algorithmic specialist selection. No Hazard Log records the risk that network constraints might prevent a patient accessing the most clinically appropriate specialist.

The digital front door triage platform. Article 5 documented how virtual GP platforms integrated with insurer pathways create the first boundary crossing: clinical data entering commercial infrastructure. The platform determines the clinical assessment, generates the referral, and routes the patient into the insurer's network. It also creates the one-way valve: it can refer into the insured pathway but cannot refer into the NHS through the e-Referral Service. The clinical safety of the consultation itself may be assessed, since CQC inspects the clinical service. But the routing decision has no clinical safety framework governing it: the system that determines where the patient goes after the consultation, what information accompanies them, and what happens when the insured pathway cannot meet their clinical needs.

The claims and clinical review system. When an insurer's clinical team reviews a case during ongoing treatment — assessing whether continued care meets medical necessity criteria, whether a different treatment pathway should be substituted, whether a patient should be transferred to a different provider — they are using a digital system to support decisions with direct clinical consequences. The system structures what information is presented, what comparisons are available, what decision pathways are offered. It is a health IT system supporting clinical decision-making. It has no DCB 0129 Clinical Safety Case.

These are not peripheral systems. They sit at every significant boundary in the insured patient pathway. They process clinical data. They influence clinical decisions. They affect patient outcomes. They are, functionally, health IT systems used in the direct care of patients. But because they are privately funded, deployed within FCA-regulated entities, and sit outside the NHS governance framework, they fall outside the mandatory scope of clinical safety standards designed specifically for this purpose.


The regulatory gap is not theoretical

The Paterson Inquiry, published in February 2020, described a “healthcare system which proved itself dysfunctional at almost every level.” The Inquiry made fifteen recommendations, including that the government should ensure arrangements “are applicable across the whole of the independent sector (private, insured and NHS funded), as a qualifying condition for independent sector providers to do NHS work.”

Five years later, as ITV News reported in February 2025, not all recommendations have been implemented. The government's only published progress update came in December 2022, more than two years before that report. The government stated it was “working urgently to implement the remaining Paterson recommendations.” Urgently, in this context, means a five-year implementation period with no published update for more than two of those years.

What Paterson exposed was not primarily a failure of individual clinical competence. It was a failure of boundary governance. Paterson practised across the NHS and the independent sector simultaneously. Information about concerns raised in one setting did not cross to the other. Governance frameworks that would have identified his outlying practice in an NHS-only context failed when his activity was distributed across organisational boundaries that nobody governed as crossings.

A clinical safety assessment of the boundary between NHS and independent sector practice — a Hazard Log that asked “what happens when clinical information about a consultant's practice in one setting fails to reach governance processes in the other?” — would have identified the structural risk that Paterson exploited. The hazard was not hidden. It was a boundary crossing that nobody assessed.

The Medical Practitioners Assurance Framework (MPAF), published by the Independent Healthcare Providers Network in 2019, addresses clinical governance for individual medical practitioners in the independent sector. CQC continues to assess clinical governance within provider organisations. Both are necessary. Neither governs the crossing between organisations. The MPAF covers the node — the practitioner's governance within each organisation. It does not cover the edge — the boundary between organisations where information, responsibility, and clinical risk transfer.

HSSIB now reaches the independent sector. Clinical safety standards do not.

One significant post-Paterson development is the establishment of the Health Services Safety Investigations Body (HSSIB) as an independent statutory body in October 2023. Under the Health and Care Act 2022, HSSIB's remit explicitly extends to investigating patient safety incidents in the independent sector — not just NHS-funded care. This is a material change. For the first time, an independent investigations body with statutory powers, including powers of entry and the ability to compel cooperation, can investigate patient safety incidents wherever they occur in England's healthcare system.

The IHPN reported in February 2025 that it had been collaborating with HSSIB throughout 2024, facilitating access to training programmes for independent sector providers and sharing insights to support HSSIB's investigations. This is constructive and necessary engagement.

But it creates an asymmetry. HSSIB can now investigate patient safety incidents in private healthcare. It can examine what went wrong, identify systemic causes, and recommend improvements. What private healthcare does not have is the framework that sits before the incident — the systematic, mandated process of identifying clinical hazards, assessing risks, implementing controls, and documenting them in a Hazard Log that is reviewed by a Clinical Safety Officer before harm occurs.

Within the NHS, the relationship is complementary. DCB 0129 and 0160 require prospective clinical risk management — identifying and mitigating hazards before they cause harm. The Patient Safety Incident Response Framework (PSIRF) provides the retrospective framework when incidents occur. HSSIB investigates systemic issues. Three layers: prevent, respond, investigate.

In private healthcare, the investigation layer now extends to the independent sector. The response layer exists within individual CQC-regulated providers. The prevention layer — systematic, mandated clinical safety assessment of the digital systems that influence patient care — does not reach beyond publicly funded services.

HSSIB can investigate the clinical consequences of a pre-authorisation delay, a routing failure, or a data loss at a boundary crossing. It cannot require that the systems producing those consequences have been prospectively assessed for clinical safety before the harm occurs. The horse has bolted before anyone checks whether the stable door was ever built.

The DCB standards review: an opportunity that should not be missed

NHS England has commenced a review of DCB 0129 and DCB 0160 to ensure they “remain up-to-date, practical and aligned with the latest advancements in healthcare technology and clinical practice.” Focus groups on DCB 0129 have been completed. Focus groups on DCB 0160 followed during 2025. Proposed revisions will be subject to public consultation.

This review is necessary and welcome. The standards were designed for a health IT environment that looked very different from today's. Interoperability between organisations, cloud-hosted platforms serving multiple providers, AI-assisted clinical decision support, insurer-integrated care pathways — none of these featured prominently when the standards were last substantively revised.

Two questions are critical for the review's relevance to the boundaries this series has examined.

First: should the scope extend beyond publicly funded services? The Data (Use and Access) Act 2025 introduces mandatory information standards for IT suppliers to the NHS and lays groundwork for smart data schemes in health. As the legislative framework for health data governance evolves, the question of whether clinical safety standards should apply to all systems processing patient data for clinical purposes — regardless of funding source — becomes increasingly urgent. A system that processes a patient's clinical data to make a decision affecting their care creates the same hazards whether the patient is NHS-funded, insured, or self-paying. The clinical risk does not check who is paying before it materialises.

Second: should the standards assess boundaries as well as nodes? Currently, DCB 0129 assesses the manufacturer's system. DCB 0160 assesses the deploying organisation's use of that system. Neither assesses what happens when clinical data leaves one system and enters another — the boundary crossing where clinical information, clinical intent, and clinical responsibility transfer between organisations. A clinical safety framework that assesses nodes but not edges is a framework that governs the places where clinical risk is best understood and leaves ungoverned the places where clinical risk is highest.

What clinical safety at boundaries would actually look like

Clinical safety at organisational boundaries is not a conceptual abstraction. It is the application of the same rigorous methodology that DCB 0129 and 0160 already require — applied to the crossings between systems rather than to the systems themselves.

A boundary clinical safety assessment would begin with the same question the existing framework asks of any health IT system: what could go wrong clinically? But instead of asking this question about a system's functions, it asks about the crossing between systems. What happens when clinical information crosses from one organisation's governance framework to another's? What could be lost, corrupted, delayed, or misinterpreted? What are the clinical consequences?

The methodology is identical. The hazard identification process examines each function of the crossing — information transfer, identity reconciliation, consent verification, responsibility transfer, clinical intent preservation, routing decision, outcome feedback — and asks what could go wrong at each point. The risk assessment evaluates severity and likelihood using the same matrix. Controls are identified. Residual risk is assessed. The Hazard Log records everything. The Clinical Safety Officer reviews and signs off.
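The hazard identification step can be sketched as a guideword-style enumeration: each crossing function listed above is paired with each failure mode named earlier (lost, corrupted, delayed, misinterpreted) to produce a checklist of questions for the assessor. This pairing technique is an assumption borrowed from structured "what if" methods, not a procedure prescribed by DCB 0129 or 0160, and `hazard_prompts` is a hypothetical helper.

```python
from itertools import product
from typing import List

CROSSING_FUNCTIONS = [
    "information transfer", "identity reconciliation", "consent verification",
    "responsibility transfer", "clinical intent preservation",
    "routing decision", "outcome feedback",
]
FAILURE_MODES = ["lost", "corrupted", "delayed", "misinterpreted"]


def hazard_prompts(crossing: str) -> List[str]:
    """One candidate-hazard question per (function, failure mode) pair."""
    return [
        f"{crossing} | {function}: what is the clinical consequence if it is {mode}?"
        for function, mode in product(CROSSING_FUNCTIONS, FAILURE_MODES)
    ]


prompts = hazard_prompts("consultant -> insurer pre-authorisation")
print(len(prompts))  # 28
print(prompts[0])
```

Prompts the assessor judges credible would then be scored and recorded in the boundary Hazard Log like any other entry; the rest can be set aside with a note explaining why.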

Consider what a boundary Hazard Log would contain for the pre-authorisation crossing examined in Article 3:

Hazard: Clinical context stripped during pre-authorisation submission, resulting in authorisation decision made without adequate clinical information.

Severity: Significant. Likelihood: Frequent.

Existing controls: Pre-authorisation form includes free-text field for clinical notes.

Residual risk: The free-text field is optional and character-limited; clinical context routinely omitted.

Risk level: Unacceptable without additional controls.

Required control: Structured clinical context fields mandated in pre-authorisation submission, with minimum dataset defined by clinical safety assessment.

Hazard: Responsibility gap between consultant's clinical recommendation and insurer's authorisation decision, with no defined framework for resolving clinical disagreements.

Severity: Major. Likelihood: Occasional.

Existing controls: Insurer's internal appeals process.

Residual risk: Appeals process resolves commercial disagreement, not clinical responsibility.

Risk level: Unacceptable without additional controls.

Required control: Defined escalation pathway resolving clinical disagreements on clinical grounds, with responsibility transfer documented and acknowledged by both parties.

These are not hypothetical hazards. They are the structural risks identified across Articles 2, 3, 4, and 5 of this series, expressed in the language and methodology of a framework the NHS has been using for over a decade. The methodology exists. The expertise exists. What does not exist is the requirement to apply them at the boundaries where clinical risk is highest.
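The two example entries for the pre-authorisation crossing can be held as structured data with a simple gate on CSO sign-off. This is a sketch under stated assumptions: the field names, the `control_in_place` flag and the `cso_can_sign_off` rule are hypothetical. The gate only checks that each required control has been implemented; a real assessment would then re-score the residual risk before the CSO signs.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class BoundaryHazard:
    hazard: str
    severity: str
    likelihood: str
    existing_controls: str
    residual_risk: str
    risk_level: str            # as recorded by the assessor
    required_control: str
    control_in_place: bool = False


def cso_can_sign_off(log: List[BoundaryHazard]) -> Tuple[bool, List[str]]:
    """Block sign-off while any 'unacceptable' hazard lacks its required control."""
    blockers = [h.hazard for h in log
                if h.risk_level.startswith("Unacceptable") and not h.control_in_place]
    return (not blockers, blockers)


pre_auth_log = [
    BoundaryHazard(
        hazard="Clinical context stripped during pre-authorisation submission",
        severity="Significant", likelihood="Frequent",
        existing_controls="Optional free-text field for clinical notes",
        residual_risk="Free text optional and character-limited; context routinely omitted",
        risk_level="Unacceptable without additional controls",
        required_control="Mandated structured clinical context fields (minimum dataset)",
    ),
    BoundaryHazard(
        hazard="Responsibility gap between consultant recommendation and authorisation",
        severity="Major", likelihood="Occasional",
        existing_controls="Insurer's internal appeals process",
        residual_risk="Appeals resolve commercial disagreement, not clinical responsibility",
        risk_level="Unacceptable without additional controls",
        required_control="Clinical escalation pathway with documented responsibility transfer",
    ),
]

ok, blockers = cso_can_sign_off(pre_auth_log)
print(ok, len(blockers))  # False 2

for h in pre_auth_log:
    h.control_in_place = True
print(cso_can_sign_off(pre_auth_log))  # (True, [])
```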

The Clinical Safety Officer at the boundary

Within the NHS, the Clinical Safety Officer role is well-defined. The CSO must be a registered clinician with current professional body registration and specific training in clinical risk management. They are responsible for overseeing the clinical safety process, reviewing hazard assessments, and signing off that residual risks are acceptable.

At an organisational boundary, the CSO role becomes more complex and more critical. A boundary CSO must understand not just the clinical risks within a single system or organisation, but the risks that arise from the interaction between two different governance frameworks. They need to understand how clinical information degrades as it crosses from one data controller to another, how clinical responsibility transfers (or fails to transfer) between organisations, and how the governance gap between two regulatory frameworks creates hazards that neither organisation's internal processes would identify.

This is specialist expertise. It requires clinical knowledge, understanding of information governance, familiarity with the regulatory frameworks on both sides of the boundary, and the methodological rigour to assess risks that sit between organisations rather than within them. It is not a role that can be filled by simply extending an existing CSO's remit across an additional organisation. It is a distinct discipline — clinical safety at the crossing rather than clinical safety at the node.

The NHS does not currently train CSOs for this role, because the existing framework does not assess boundaries. The independent sector does not have CSOs at all in most cases, because the clinical safety standards are not mandated. The result is a gap in both capability and requirement: no framework requires boundary clinical safety assessment, and no established pathway develops the expertise to conduct one.

The commercial reality: governance as competitive advantage

The regulatory trajectory is clear. HSSIB's remit now covers the independent sector. CQC is recovering from its operational difficulties and restructuring under new leadership, with sector-specific Chief Inspectors and a target of 9,000 assessments by September 2026. The FCA's Consumer Duty requires insurers to demonstrate products deliver good outcomes and avoid foreseeable harm. The DCB standards review may extend scope. Paterson's recommendations remain partially unimplemented, with the government acknowledging the need for urgency.

Each of these regulatory pressures, examined individually, creates incremental compliance obligations. Examined together, they converge on boundary governance. HSSIB investigates incidents at boundaries. CQC assesses organisations but increasingly expects evidence of pathway safety. Consumer Duty asks whether the product — the insured pathway — delivers good outcomes across the entire journey. The DCB review asks whether clinical safety standards remain fit for purpose as healthcare technology evolves beyond single-organisation deployment.

Organisations that wait for each regulatory requirement to be individually mandated will find themselves perpetually catching up. Organisations that recognise the convergence and implement boundary clinical safety assessment now — that commission boundary Hazard Logs, appoint boundary CSOs, and document the clinical safety of their crossings before regulation requires it — will be positioned differently.

An insurer that can demonstrate to the FCA that its pre-authorisation platform has a Clinical Safety Case, with identified hazards, documented controls, and a named CSO who has signed off that the clinical risks at the boundary are acceptably managed, occupies a different regulatory position from one that cannot. A private hospital group that can show CQC that the crossings between its organisations — referral pathways, discharge processes, data transfers — have been clinically safety-assessed, with boundary Hazard Logs maintained by a qualified CSO, demonstrates a level of governance that the Well-Led framework values but does not yet specifically require.

This is not speculative commercial positioning. It is the same pattern that played out with data protection. Organisations that implemented robust data governance before GDPR enforcement gained competitive advantage, not because they were ahead of their competitors technically, but because they could demonstrate governance maturity when scrutiny arrived. The organisations that scrambled to comply after enforcement found the process more expensive, more disruptive, and less credible.

Clinical safety at organisational boundaries is the same opportunity. The methodology exists. The expertise exists. The regulatory convergence is visible. The question is not whether boundary clinical safety assessment will be expected. It is whether your organisation will have it in place before or after it is demanded.

Next in the series: The Seven Flows Applied to Insured Patient Pathways. It applies the Seven Flows methodology to the full insured patient pathway, mapping each flow at every crossing in private sector vocabulary, with a 7×5 maturity matrix that makes the governance gap measurable.

Private Healthcare Governance Series

- #1 Practising Privileges and the Governance Gap

- #2 The Provider Network as Ungoverned Constellation

- #3 The Clinical-Commercial Boundary

- #4 The NHS-Private Interface

- #5 The Digital Front Door

- #6 Clinical Safety at Boundaries (this article)

- #7 The Seven Flows Applied to Insured Pathways

- #8 The Regulatory Convergence

Related: Architecting Neighbourhood Health

The same boundary governance methodology applied to NHS multi-organisation networks — including the constitutional complexity of co-locating five independent organisations in a single building.